Currently, I am enrolled in Northeastern University’s Experience Design Master’s Program and one of my classes is “Design for Behavior/Experience”. In our first Behavior Design project, entitled Motion Graphics, I explored the concept of “Choreographic Objects”, a term invented by famed choreographer and artist William Forsythe. Forsythe described his concept as follows: “The introduction of the term ‘choreographic object’ is intended as a categorizing tool that can help identify sites within which to locate the understanding of potential organization and instigation of action-based knowledge” (Neri, 49).

Inspired by Forsythe’s “City of Abstracts” video art installation at Boston’s Institute of Contemporary Art, I sought to create an interactive piece of digital art that gives participants a heightened awareness of their body and its movements. “City of Abstracts” is a video-feedback wall that presents viewers with images of themselves; however, these images carry various delay and distortion effects that alter, accentuate, and transform the participants’ movements on screen.

This unexpected distortion of the image encourages participants to experiment with body movement in order to see the various effects play out. In this vein, I decided to build a choreographic object that would create an “awareness” in participants of how their physical movements and gestures could be used to create digital art. Our goal was to examine how an artifact can direct people to perform actions with their bodies, as well as how it can encourage or discourage certain behaviors.

Forsythe says “The Choreographic Objects are neither primarily optical nor purely aesthetic, but conceived as a set of problems and relationships- a ‘combination of perceptual systems’” (Neri, 13). It was this “combination” of the senses that we wanted to explore by using a Kinect motion sensor to transform body actions into kaleidoscopic images. Louise Neri explains that “A principle feature of a choreographic object is the preferred outcome is a form of knowledge production for whoever engages with it, engendering an acute awareness of the self within specific action schemata” (49).

My artifact encouraged this knowledge production by requiring participants to move through the space with their bodies and arms to learn which positions, planes of movement, and gestures would generate a response. Participants quickly learned that certain areas of the space were more active in producing imagery and that particular gestures triggered particular effects.

Neri goes on to say that “A choreographic object is, by nature, open to a full range of unmediated perceptual instigations…These objects are examples of specific physical circumstances that isolate fundamental classes of motion activation and organization” (49). Because we used hand position as the program’s input, some movements were far better than others at producing rich imagery. This quickly trained participants to make sweeping hand gestures and to explore the active area by moving left and right, and even up and down by standing and squatting.

The visualization itself was created with the Processing visualization framework, the Kinect for Windows SDK 2.0, and the KinectPV2 library.

At first I built a kaleidoscope generator that took only the mouse X/Y position as input, so I could get that part working on its own.

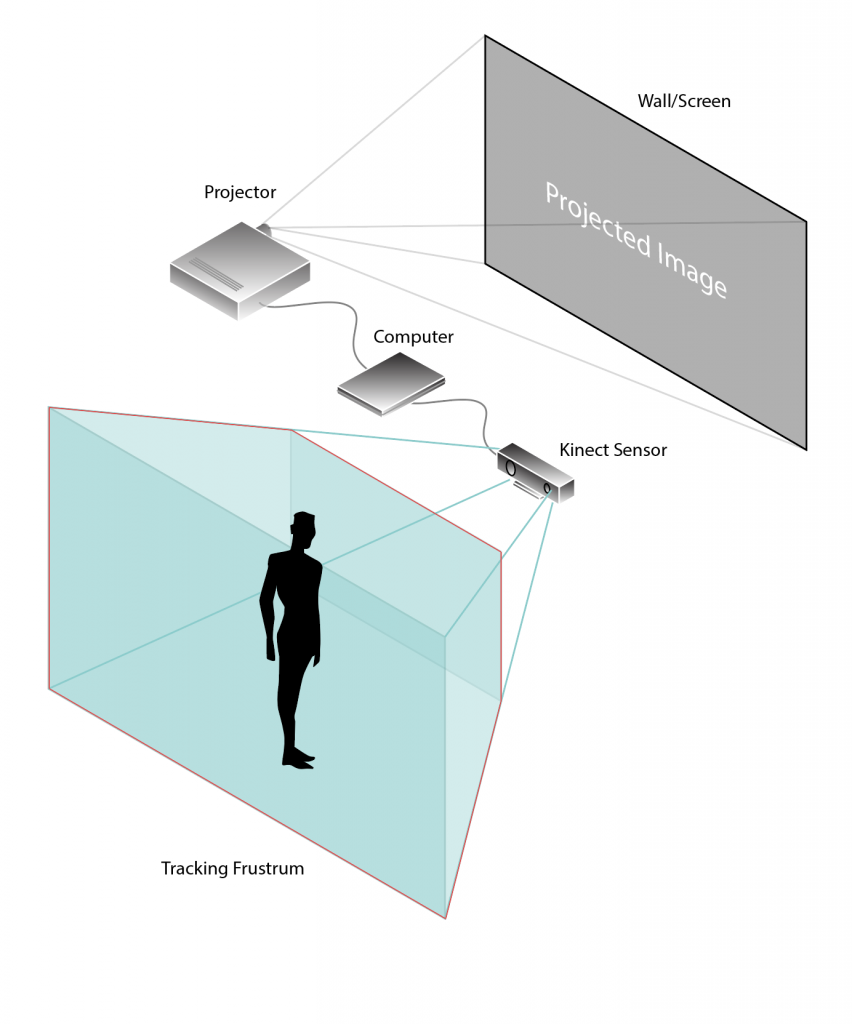
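The post doesn’t include the generator’s code, but the core idea of a kaleidoscope can be sketched briefly: each input point (here, the mouse position) is copied into several wedges around the canvas center, and each wedge also gets a mirrored copy. The sketch below is plain Java rather than a Processing sketch, and the `Kaleidoscope` class, its `reflections` method, and the wedge count are hypothetical illustrations, not the project’s actual implementation.

```java
import java.util.ArrayList;
import java.util.List;

/**
 * Hypothetical helper: computes the kaleidoscope copies of one input point.
 * The point is taken relative to the canvas center, rotated into each of
 * `segments` wedges, and also reflected across each wedge's start line,
 * which yields the dihedral (mirror-and-rotate) symmetry of a kaleidoscope.
 */
public class Kaleidoscope {

    /** Returns 2 * segments points: one rotated and one mirrored copy per wedge. */
    public static List<double[]> reflections(double x, double y,
                                             double cx, double cy, int segments) {
        if (segments < 1) {
            throw new IllegalArgumentException("segments must be >= 1");
        }
        double dx = x - cx;
        double dy = y - cy;
        double radius = Math.hypot(dx, dy);   // distance from center is preserved
        double angle = Math.atan2(dy, dx);    // angle of the input point
        double wedge = 2 * Math.PI / segments;

        List<double[]> points = new ArrayList<>();
        for (int i = 0; i < segments; i++) {
            double base = i * wedge;
            double rotated = base + angle;    // same offset inside wedge i
            double mirrored = base - angle;   // reflected across the wedge's start line
            points.add(new double[] {cx + radius * Math.cos(rotated), cy + radius * Math.sin(rotated)});
            points.add(new double[] {cx + radius * Math.cos(mirrored), cy + radius * Math.sin(mirrored)});
        }
        return points;
    }

    public static void main(String[] args) {
        // Mouse at (500, 300) on an 800x600 canvas with 6 wedges -> 12 points.
        for (double[] p : reflections(500, 300, 400, 300, 6)) {
            System.out.printf("(%.1f, %.1f)%n", p[0], p[1]);
        }
    }
}
```

In a Processing sketch, a shape would then be drawn at every returned point each frame; swapping the mouse position for a tracked hand position leaves this function unchanged, which is one reason to prototype with the mouse first.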

Once I was satisfied with the overall behavior using the mouse, I used the KinectPV2 library to integrate my Kinect sensor with the Processing application. The system was set up with a Kinect sensor, a Surface tablet, and an LED projector.

The overall mapping of gestures to input was:

- Right hand X – Shape X position
- Right hand Y – Shape Y position
- Right hand Z – Shape color / size
- Left hand X/Y – Shape velocity/vector
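The mapping above can be sketched as a set of clamped linear maps, the same arithmetic as Processing’s `map()` followed by `constrain()`. This is a minimal, hypothetical sketch: it assumes hand positions arrive as Kinect v2 camera-space coordinates in meters, and the input ranges, output ranges, and the `HandMapping` class are illustrative guesses, not the values tuned for this project.

```java
/**
 * Hypothetical sketch of the hand-to-shape mapping.
 * Assumed input: camera-space hand positions in meters
 * (X left/right, Y up/down, Z distance from the sensor).
 * All ranges below are illustrative, not the project's values.
 */
public class HandMapping {

    // Assumed usable range of hand positions, in meters.
    static final double X_MIN = -0.8, X_MAX = 0.8;
    static final double Y_MIN = -0.6, Y_MAX = 0.6;
    static final double Z_NEAR = 1.0, Z_FAR = 3.0;

    /** Linear map from [inMin, inMax] to [outMin, outMax], clamped to the output range. */
    static double mapClamped(double v, double inMin, double inMax, double outMin, double outMax) {
        double t = (v - inMin) / (inMax - inMin);
        t = Math.max(0.0, Math.min(1.0, t));
        return outMin + t * (outMax - outMin);
    }

    /** Shape parameters driven by the two hands. */
    public static final class Shape {
        public final double x, y, size, hue, vx, vy;

        Shape(double x, double y, double size, double hue, double vx, double vy) {
            this.x = x; this.y = y; this.size = size; this.hue = hue; this.vx = vx; this.vy = vy;
        }

        @Override
        public String toString() {
            return String.format("pos=(%.1f, %.1f) size=%.1f hue=%.1f vel=(%.2f, %.2f)", x, y, size, hue, vx, vy);
        }
    }

    public static Shape fromHands(double rx, double ry, double rz,
                                  double lx, double ly,
                                  int width, int height) {
        // Right hand X/Y -> shape position (screen Y grows downward, so Y is flipped).
        double x = mapClamped(rx, X_MIN, X_MAX, 0, width);
        double y = mapClamped(ry, Y_MIN, Y_MAX, height, 0);
        // Right hand Z -> size and hue: the nearer the hand, the bigger the shape.
        double size = mapClamped(rz, Z_NEAR, Z_FAR, 120, 10);
        double hue = mapClamped(rz, Z_NEAR, Z_FAR, 0, 360);
        // Left hand X/Y -> velocity vector in pixels per frame.
        double vx = mapClamped(lx, X_MIN, X_MAX, -5, 5);
        double vy = mapClamped(ly, Y_MIN, Y_MAX, 5, -5);
        return new Shape(x, y, size, hue, vx, vy);
    }

    public static void main(String[] args) {
        System.out.println(fromHands(0.0, 0.0, 2.0, 0.4, 0.0, 1920, 1080));
    }
}
```

Clamping keeps the shape on screen when a hand drifts outside the assumed range, which matters because tracked joints can report positions well beyond the area a participant normally reaches.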

It took some experimentation and iteration to translate the X/Y/Z positions of the right and left hands, as reported by Kinect body tracking, into coordinates that produced satisfying results on screen. Overall, I was fairly happy with the results, especially considering this was a quick, two-week sketch of a project.
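The post doesn’t say whether any filtering was part of that iteration, but raw skeleton joints often jitter from frame to frame, and one common remedy is exponential smoothing: each new sample is blended with the previous output. The `JointSmoother` class and its `alpha` value below are hypothetical, shown as one possible technique rather than what the project used.

```java
/**
 * Hypothetical exponential smoothing filter for one joint coordinate.
 * Blending each new sample with the previous output trades a little lag
 * for a steadier shape. The alpha used in main() is illustrative, not tuned.
 */
public class JointSmoother {
    private final double alpha;   // in (0, 1]; higher = more responsive, less smoothing
    private double value;
    private boolean initialized = false;

    public JointSmoother(double alpha) {
        if (alpha <= 0 || alpha > 1) {
            throw new IllegalArgumentException("alpha must be in (0, 1]");
        }
        this.alpha = alpha;
    }

    /** Feeds one raw sample and returns the smoothed value. */
    public double update(double sample) {
        if (!initialized) {
            value = sample;       // first sample passes through unchanged
            initialized = true;
        } else {
            value = alpha * sample + (1 - alpha) * value;
        }
        return value;
    }

    public static void main(String[] args) {
        JointSmoother sx = new JointSmoother(0.3);
        double[] raw = {0.10, 0.14, 0.08, 0.12, 0.50};
        for (double r : raw) {
            System.out.printf("raw %.2f -> smoothed %.3f%n", r, sx.update(r));
        }
    }
}
```

One smoother per coordinate (for example, right hand X, Y, and Z) would be updated once per frame before the values are mapped to shape properties.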

Going forward, I would like to explore these concepts further by connecting more parts of the body to different properties of the software (for example, the right and left knees might control the shape’s Y and Z rotation) to create a richer language of interaction for participants and allow them to explore the concept of action-based knowledge. In any case, I had a lot of fun getting familiar with the Processing framework and learning more about motion tracking in my first Kinect-based project.